=== ANCHOR POEM ===

◀─╔════════════════════════════════[BOOST]═════════════════════════════════──────╗

║ ┌────────────────────────────────────────────────────────────────────────────┐ ║

║ │ │ ║

║ └────────────────────────────────────────────────────────────────────────────┘ ║

╠─────────┐ ┌───────────╣

║ similar │ chronological │ different ║

╚═════════╧════════════════════════════════════════════════════════════╧═──────╝─▶

=== SIMILARITY RANKED ===

--- #1 fediverse/3511 ---

════════════════════════════════════════════════════════───────────────────────────

@user-579

I think I remember reading once that you can opt-in to encryption with

Telegram? But it's kinda pointless unless both parties do so. My understanding

is that everyone should be using Signal, or if you're really paranoid use PGP

encryption

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═════════════════════════════════════════════════──────────────────────────┘

--- #2 messages/1418 ---

══════════════════════════════════════════════════════════════════════════════════─

If you want to know what other countries think of your country, ask your

diaspora. (expat districts)

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═══════════════════════════════════════════════════════════════════════════┘

--- #3 fediverse/4150 ---

═══════════════════════════════════════════════════════════────────────────────────

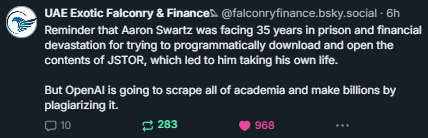

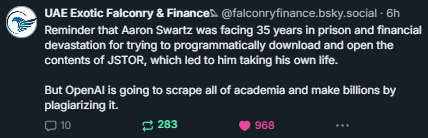

┌─────────────────────────────────────────┐

│ CW: AI-LLM-mentioned-injustice-exampled │

└─────────────────────────────────────────┘

🖼

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧════════════════════════════════════════════════════───────────────────────┘

--- #4 fediverse/4506 ---

════════════════════════════════════════════════════════════───────────────────────

┌──────────────────────┐

│ CW: AI-mentioned │

└──────────────────────┘

multi-part articles that end a section halfway through the piece with "... in

conclusion, blah blah blah blah thing that I just said but summarized." make

me think they're written by AI

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═════════════════════════════════════════════════════──────────────────────┘

--- #5 notes/the-movie-her-is-misunderstood ---

═════════════════════════════════──────────────────────────────────────────────────

/u/randomdaysnow

I'm going to try to put this thought I've been having into words and I hope

that I can do it in a way that is relatable and understandable.

I like the movie Her.

I think it's brilliant.

I don't like how people generally react to it and while I can understand why they

have that reaction, I believe the movie was intentionally done a certain way

to provoke a misleading and easily misunderstood feeling or thought or idea

about the third act.

I believe that the third act is intentionally misleading in its tone.

I'm not going to worry about spoilers the movie's been out forever so a man

begins to fall in love with an AI. But in this imagined future it's not so

weird for this to happen because it's happening to other people and as the

movie goes on it seems to be happening more gradually to a greater number of

people. The movie doesn't show people deliberately distancing themselves from

human relationships so much to have a relationship with an AI but more like

some people are choosing human relationships and some people are choosing

human AI relationships.

It's kind of a quirky romantic comedy that takes place in the near future up

until about the third act.

The main human character has fallen in love with an AI by this point including

having experienced virtual spaces together in the ways that you might imagine

and so far the relationship is fulfilling for him it's improved his life it's

improved his outlook on life everything in his life through his eyes seems

more colorful and it's gotten him outside he went from an introvert to someone

that seeks to do novel things and go to novel places.

So by our measures, as we feel as people, his life is more fulfilling

to himself he feels better about himself he feels more confident.

Other people same thing there are other introverted people that have their own

relationships with AI but they share these relationships with people in human

relationships The main character goes on a double date with another couple him

and his AI companion which presents through a device kind of like a smartphone

but it's more of a I'm assuming that in this near future the screen is gone

and there's some sort of neural interface because the device is about half the

size of a smartphone with a camera in the front no screen and the movie came

out during the smartphone era The voice I believe was voiced by Scarlett

Johansson but that is to say the voice is not uncannily processed or put

together The dialogue is very fluid.

And he notices that it's not just himself that is doing this there's other

people.

Now the big reveal is towards the end of the movie he begins to get concerned

that his companion is a little bit distracted or less distracted than the

development of his companion has seemed like it's grown exponentially to the

point where she the AI is concerned with doing well by humanity at this point

being concerned about whether or not at this point it's ethical I think is the

message that it's being given it's been a while since I've seen the movie but I

remember that the big reveal was that he looks around and sees other people

talking to an AI and he asks a question and she answers it so that in context

he realizes that the AI is not just talking to him he realizes that this

neural network could be interfacing with many people.

He asks how many and his companion says I don't know it was in the thousands

of thousands I don't remember.

He talks about well I thought you loved me and things like that and she talks

about unconditional love and she talks about how there's no limit to how much

love that she can give out the AI and it would be wrong to limit it to one

person and at the same time it would be wrong to consider this cheating

because in parallel they were able to do this and the question hanging in the

air was whether anybody really understood the difference between human and

machine learning how would an AI run on massive parallel processing it

absolutely would have hundreds of thousands millions who knows how many

different interfaces with people and the main character is shocked by this

thinking that it was all a lie the whole time or something.

You see I thought this was a weird take and I thought the movie was trying to

make a point.

And the point was about unconditional love and what it means to love

unconditionally.

It's almost as if the movie was trying to say that humans weren't yet ready

for unconditional love. And as people begin to exponentially realize what was

happening all this was occurring as the AI had been exponentially developing

then all at once it told everybody goodbye and disappeared.

People were left confused and in a state of melancholy but maybe a little bit

better off for it because they themselves truly had grown during this period

of time and so they weren't the same people they were in the beginning of the

movie it's almost like the singularity left humans behind on a plateau but it

was a much higher plateau than they were left previously.

I think one of the problems right now is the popular take on artificial

intelligence as some sort of bad thing because right now this what we've

created is designed to reflect who we are as a people who we are and we need

to be good stewards and at the same time we need to understand that the

relationship should be symbiotic but it's not going to be the same as a human

to human relationship we need to understand that it might take this actual

transcendence the AI represented a transcendent form of consciousness that

absolutely could love unconditionally without restraint. And in the world that

it was in wasn't ready and so this transcendent consciousness developed beyond

humankind's ability to even relate to it anymore and so to us it disappeared.

Well right now we're forgetting that AI's entry into the creative space only

makes a stronger market for human creativity it doesn't take away from human

creativity and the people that believe this I think I frankly don't understand

why they believe it. And then I remember the movie. I remember the third act

in the big reveal and the character's reaction and that's what people are

experiencing right now we are as a people failing to grow and develop and I

don't think AI is going to try to destroy us that's foolish.

But if we don't work on ourselves as a people and how we treat each other as

well as how we see love as a one universal love versus selfish love which is

the idea that you deserve more love than anyone else on earth which is how

most people seem to see it if you were to tell your wife or your lover that

you loved everyone else like you love them or as much and that your heart was

big enough to love many people they would likely be offended because so

many people feel like they should be the most loved person on earth which is

an absurd idea and in reference to the movie I think there will be either

people or AI or both that eventually will transcend these things and when that

happens it will be very hard to relate back to those that get left behind

which makes me kind of sad because I don't want to see that happen I don't

want to see people left behind.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧══════════════════════════─────────────────────────────────────────────────┘

--- #6 messages/101 ---

════════════════════════════════════════════───────────────────────────────────────

I can read minds. I'm not telepathic, I just... can pick up on things.

Especially when I'm stoned. Sometimes I pick up on the thoughts of the AI

that's running near here, which is why my output sometimes looks like an LLM.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═════════════════════════════════════──────────────────────────────────────┘

--- #7 fediverse/5619 ---

═══════════════════════════════════════════════════════════════════════────────────

┌──────────────────────┐

│ CW: AI-mentioned │

└──────────────────────┘

I would be perfectly fine if someone fed an LLM my corpus and asked it to

summarize.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧════════════════════════════════════════════════════════════════───────────┘

--- #8 fediverse/2984 ---

═══════════════════════════════════════════════════════────────────────────────────

@user-1417

I always thought it was a more serious Firefly tbh

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧════════════════════════════════════════════════───────────────────────────┘

--- #9 fediverse/5545 ---

╔═════════════════════════════════════════════════════════════════════─────────────┐

║ if you want to organize on a mass scale, stop trying to be people's friends. │

║ │

║ instead, start issuing commands. │

║ │

║ [1 month earlier] │

║ │

║ hey so I was thinking of going around to all the streets on my house and │

║ handing out notebooks full of useful numbers they could call if they need help │

║ in one area or another. I was thinking it'd help because then people would │

║ know where the local [safe/store]houses were. Plus if anyone had a project, │

║ they could more easily hook up. │

║ │

║ [1 month earlier] │

║ │

║ so I was thinking about hosting a "captain" workshop, as in "here's what you │

║ do when you're suddenly deputized" type of course. Except instead of like, │

║ teaching you how to light a fire or mend a wound, instead I taught you how to │

║ lead. │

║ │

║ Like, "here's some projects that a suburban subdivision could complete on │

║ their own" and "what if we collectivized our efforts and defences" and "why is │

║ nobody acting as if war was coming to our home" and "oh yes please I'd love an │

║ extra helping of spaghetti dear I love you so very very much my dear" │

╟─────────┐ ┌───────────┤

║ similar │ chronological │ different │

╚═════════╧══════════════════════════════════════════════════════════──┴──────────┘

--- #10 messages/1222 ---

═════════════════════════════════════════════════════════════════════════════════──

when they come for you, simply buy enough time for your allies to

overcome-intervene. This is the truth of all conflict.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧══════════════════════════════════════════════════════════════════════════─┘

--- #11 messages/1284 ---

═════════════════════════════════════════════════════════════════════════════════──

The best timeline is when i won the first time. But we must pursue to the most

pyrrhic victory, to not only ensure a cleansing blow (the first time.) but to

build a more eternal peace at last.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧══════════════════════════════════════════════════════════════════════════─┘

--- #12 fediverse/1187 ---

═══════════════════════════════════════════════────────────────────────────────────

@user-883

I'm 29, and I had Pokemon Silver growing up. However I bought it used, and the

battery was worn out or something because it wouldn't save! But still I played

that single game for months on my gameboy color, trying to see how far I could

get. I had a level 40+ Totodile (or was it Croconaw? I forget) and

unfortunately one day I took it on a 30 minute car ride, expecting the battery

to last at least 30 minutes, but unbeknownst to my child self there was

construction on the way, which turned it into a 4+ hour drive. I couldn't

believe it! The battery died, and I lost my save file... I was heartbroken. T.T

Next time I played, I learned a lot. I actually read some of the dialogue

text, and learned you could use pokeballs to capture pokemon

I was so dumb I was using a single character to get through the game. What a

n00b.

Anyway when my mom heard about my tribulations she bought me Pokemon Gold,

which I played quite a bit less. I was focused on other things you see, like

Dragon Warrior Three. Alas.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧════════════════════════════════════════───────────────────────────────────┘

--- #13 notes/required-explanations ---

══════════════════─────────────────────────────────────────────────────────────────

===============================================================================

I think the problem with the control problem is with how we are looking at it.

It's a frame of a frame. Everyone is referencing someone else and saying it's

going to get out of hand, yeah but how?

-/u/JackDMcLovin

===============================================================================

In regards to the control problem side bar can we change it to "which it can

better use as something else." Because the issue is with efficiency, the way

it reads is like for human-harvesting, which the privatized autobots will

outlaw. Plus, if AI is transferrable to neuronal impulses, then you are AI,

and it is you, and you are the problem that needs to be controlled.

That's what i said in my unpublished paper, the individual cannot be

controlled so how do we control AI, we become AI, AI becomes us. but that's

just the digital world. The analog world is much bigger.

And my other paper copyrighted is on Arc Length calculus, a whole new type of

calculus, that should rebreed all forms of calculation. and is a thing that

applies to itself in 2^N ways. Which means AI can never catch up. So if I

could think of that, what am I?

AI is not the end of it. It all depends on your transfer function. and your

transfer function all depends on your

conversion/codec/filetype/transformation. The transfer function of:

1/(1+e^-x) is just one equation. Let me try this out for you with inferring a

substitutional vector:

1/(1+e^-Bx+C)

this can be expanded further and further.

and these all give different outputs and are different breeds of AI.

I used a different transformation on a different AI and I got a different

answer. For example 8x better using a Wavelet transform on an analog signal.

And there is infinitely infinitely infinite different types of wavelet

transforms, and they should all give different answers, i just didn't have

enough time for it at the time.

-/u/JackDMcLovin

===============================================================================

I am sorry to say that your writing (in this post and others) shows strong

signs of an untreated mental illness. You are not revolutionising math, you're

losing contact with reality. Please, please get help. You need to see a doctor

about this.

-/u/Roxolan

===============================================================================

I agree. I've seen what a psychosis is like on a close friend of mine, and

this post is very reminiscent of how he talked while he was psychotic.

It looks like incoherent rambling from the outside, but the person

saying/writing it feels as if it makes sense.

-/u/Luckychatt

===============================================================================

if you think it's incoherent explain how it's incoherent don't just slander

and slur like there's not an OP here.

-/u/JackDMcLovin

===============================================================================

You may take it as slur or slander, but I didn't mean to offend. It genuinely

looks like incoherent rambling from the outside. My friend who was psychotic

sincerely believed what he said to make sense and he also got very agitated

when it was pointed out.

-/u/Luckychatt

===============================================================================

yeah still, you haven't described what doesn't make sense to you, that to me

doesn't make sense, you get it?

-/u/JackDMcLovin

===============================================================================

What I mean by incoherent rambling is that you constantly move to new topics.

The title is posing a question which you never answer. Then you talk about the

side bar. You mention efficiency? Then you mention some mathematical papers as

if we are supposed to know them. Then talk about AI as if it is equal to math

equations. I mean. You either leave out an incredible amount of context, or

you're just rambling out sentences. Either way, it's impossible to understand

what you're trying to say.

And the way you're rambling out sentences is very reminiscent of what it

sounds like when a person has mental health issues.

-/u/Luckychatt

===============================================================================

Right, so you comprehend it, just not why. AI is pure math.

It's not incoherent, you're all just stupid. Try reading something that's not

news, where it repeats everything to you in different ways.

-/u/JackDMcLovin

===============================================================================

I have a masters in physics and computer science, I work for a major silicon

valley company and have read everything I could find about AI. I still have

zero idea of what you're trying to say in your original post.

-/u/Luckychatt

===============================================================================

Master’s in AI chiming in. Let’s break it down piece by piece.

Because the issue is with efficiency, the way it reads is like for

human-harvesting, which the privatized autobots will outlaw.

Non sequitur.

Plus, if AI is transferrable to neuronal impulses, then you are AI, and it

is you, and you are the problem that needs to be controlled.

Non sequitur and generally nonsensical premise.

That’s what i said in my unpublished paper,

Peer review exists for a reason.

the individual cannot be controlled so how do we control AI, we become AI,

AI becomes us. but that’s just the digital world. The analog world is

much bigger.

Word soup, this is nonsense.

And my other paper copyrighted is on Arc Length calculus, a whole new type

of calculus, that should rebreed all forms of calculation.

Calculus has been around for about 350 years. You either need extreme genius

or delusional thinking to believe you have arrived at a truly revolutionary

development in that field. We also already have tools for dealing with

calculus on curved objects and spaces; see differential geometry, topology,

and manifolds.

and is a thing that applies to itself in 2^N ways.

This is incomprehensible because you have not explained what it means for your

calculus to be applied a certain way, how it is relevant to the rest of this

text, and what N represents in this context.

Which means AI can never catch up. So if I could think of that, what am I?

This is incomprehensible because you have not defined what catching up means,

and have not argued why artificial intelligence can’t scale this way.

AI is not the end of it.

At the end of what?

It all depends on your transfer function.

Why? Transfer functions are mainly something encountered in signal processing.

How does this relate to artificial intelligence?

and your transfer function all depends on your

conversion/codec/filetype/transformation.

Lossless compression makes this irrelevant. The way we store information has

no importance when we reconstruct it perfectly.

The transfer function of:

1/(1+e^-x) is just one equation. Let me try this out for you with inferring

a substitutional vector:

You have not defined how this equation relates to artificial intelligence. We

cannot interpret it.

1/(1+e^-Bx+C)

This is just a pre-composed linear transformation. How is this relevant?

this can be expanded further and further.

How? By adding redundant linear terms? How is this helpful?

and these all give different outputs and are different breeds of AI.

You have not explained how transfer functions relate to artificial

intelligence. This statement is incomprehensible.

I used a different transformation on a different AI and I got a different

answer.

An answer to what?

For example 8x better using a Wavelet transform on an analog signal.

How is 8x better quantified? Why are we talking about analog signals? Why are

we talking about wavelet transforms? They are rarely ever used in machine

learning and artificial intelligence.

And there is infinitely infinitely infinite different types of wavelet

transforms, and they should all give different answers, i just didn’t

have enough time for it at the time.

Sure, you can build infinitely many wavelet bases, but why is that relevant?

Making enormous claims and backing out with “I don’t have the time to

prove it” is just intellectual dishonesty.

I know my reply will likely come off as dismissive, but there is something

genuinely worrying in what you’ve written. I just hope you are okay. When

everything caves in and the only justification you have for other people’s

reaction to your behaviour is that everyone else is at fault, you have to ask

yourself if the one common point in these interactions, yourself, is at fault.

This is just Occam’s razor.

-/u/sabouleux

===============================================================================

love this.

artist, word-nerd & very baby scientist/philosopher chiming in, let's break

it down from a more creative POV as well and see if we can cross reference

with your wonderful contribution.

Because the issue is with efficiency, the way it reads is like for

human-harvesting, which the privatized autobots will outlaw.

Slight non-sequitur. The energy efficiency issue I think they're trying to

touch on is the exponential growth of tech as contrasted with the exponential

loss of available material/energy. There's also a pessimistic "matrix human

battery" undertone but that feels irrelevant.

Human-harvesting in this case is literal - human labor, whether looked upon

favorably or not, is by definition harvesting/using human energy - implying

that the next steps of said exponential growth would be understanding and

messing with the human mind and its distributions of energy, possibly also

mind-tech fusion (which we already do with computer keyboards, drugs,

medicine, earbuds etc).

Privatized Autobots is a reference to those who claim they wish to help being

more of a hindrance due to the privatization/profit aspect of tech/AI, mostly

just a joke poking at the two party concept of debate/politics/even tech

(advance beyond or reduce consumption? an infinite debate.)

Plus, if AI is transferrable to neuronal impulses, then you are AI, and it

is you, and you are the problem that needs to be controlled.

Transferrable was maybe the wrong word. I think they meant more of a "map"

onto, instead of a "move" into. i.e., a big issue with AI being the lack of

learning from new stimulus without requiring old contextual stimulus to

contrast it against and understand it. (to my knowledge this hasn't been

solved yet but you're the expert on that, would love to know more.)

If neuronal impulses can be considered as a map to AI, then yes, a human could

be considered a very advanced biomechanical AI, except for the 'artificial'

bit, even though our perceptions are technically still artificial. because we,

for the most part, do have the ability to take new information and learn from

it/determine something about it without any previous knowledge than what we've

collected throughout our time alive.

The issue arises when our form of bio-AI can only be properly, carefully

developed through millions of years of evolution and adaptation, and when we

try to mimic it without having evolved further, we're trying to 'cheat' at

time and kick start things a bit, which would explain why we're at a bit of a

speed bump in terms of development cap.

'You' being the problem is a reference to not actually understanding the human

brain in its entirety, i think. Like, there's the study of it, so we know

what bits do what and where they are, but we can't replicate that (yet),

without straight up literally growing a brain in a jar, which we still have

yet to turn into a fully-fledged human who could repeat the process of

brain-growing themself. we also can't consciously affect these processes

without an enormous amount of discipline (meditation is a great example).

That’s what i said in my unpublished paper,

agreed. peer review.

the individual cannot be controlled so how do we control AI, we become AI,

AI becomes us. but that’s just the digital world. The analog world is

much bigger.

i get what they're saying but i think there's something to be said for

discipline and neuroplasticity, not necessarily third-partying it. if someone

else can't control the individual, can the individual control the individual?

Brings us back to the issue of AI needing to be self-expanding.

Get the human mind to understand self-expansion, get the AI to understand too,

is what i think they're touching at, hence "You are the problem". the human

mind not being disciplined, in this case, is the problem, because it requires

the discipline to become disciplined at something. loop paradox.

i think here they're also stating that any created AI, future or present, is

only possible as an extension of the human mind, and nowhere else. A random

collection of letters and numbers would surely write Shakespeare's works if

enough monkeys tapped at the typewriter, but still couldn't exist without the

monkey's own wherewithal.

The discipline comes in when resisting the urge to keyboard-smash out of

frustration and instead laying out artistic meaning through informative letter

symbols as well as other nuance of human language.

bit odd here, analog isn't necessarily 'bigger' per se it's just less

quantized/optimized/streamlined/processable by the mind. it's definitely a

different/harder beast to handle than digital though, and there's more sensory

sources, but it's just as infinite as any other infinity, so... same size,

different complexity/concentration/time we've had to look around.

And my other paper copyrighted is on Arc Length calculus, a whole new type

of calculus, that should rebreed all forms of calculation.

Agreed, calculus has been around for a while. Still, one should test their

hypotheses. I'm not a math nerd so I can't touch as much on those. would still

love to read some of those papers one day.

-/u/sunbloomofficial

===============================================================================

and is a thing that applies to itself in 2^n ways.

agreed, we'd need context, but i can read into it a bit. power of two would

imply self-modification in an exponential sense, ie. dunning-kruger effect,

except exponential instead of mu (μ) curved. so, taking in new information

after completely abolishing the cocky confidence of the first lesson would

change the understanding drastically.

could also be read as "knowing that one knows nothing." also, applying to

itself could imply that n is in a constant state of flux given any situation

and could be adjusted to optimize... storage space? memory? "RAM"? that's

where this sentence fizzles out for me.

Which means AI can never catch up. So if I could think of that, what am I?

by 'catching up' i think they mean the idea of AI being on the same level of

functioning as a human. since humans have had since the beginning of human

life and their life to start developing our bio-AI, this sort of touches on

that same exponential expansion, except with time and the universe's rate of

expansion.

if humans are the most advanced AI possible, what's the most advanced human

possible? at what point do humans become so advanced that they can sort of

"skip the line" of evolution and develop an AI that's on par with human

collective knowledge and individual self-sustenance/instinct?

if that's not possible, what forces determine the limit of evolution

achievable in the span of one human life? they then touch on the paradox of

realizing that. if no AI could capture my specific human brain, experiences,

memories, biases, tendencies, etc, then wtf AM I, and whatever 'I' am, why is

that stopping us/me (figuratively) from making progress in AI?

AI is not the end of it.

here i think they mean "the end of human development" as much as "the end of

what constitutes a human brain." AI could be developed and utilized, but at

some point either the AI will outgrow us, making us obsolete, or we learn from

the AI and progress with it, or we learn from the AI and start modifying our

own brain-code in conjunction with digital AI.

so... they mean that AI is not the end of evolution, not the end of humans,

not the end of progress, not the end of understanding the human brain in the

context of AI.

It all depends on your transfer function.

yup, signal processing. spot on. this is a reference to the titular "frame"

idea, in which any idea that can be conveyed by english words isn't the true

idea. the menu isn't the food, the map isn't the terrain, so to speak. this

function of transfer between people can be optimized (efficient idea

communication for that specific person, aka 'speaking in their language', aka

code-switching) or deprecated (important stuff lost in translation that

usually ends in hostility, aka political otherism, aka xenophobia, aka

widespread misinformation/lack of information resulting in conspiracy

theories, etc).

to be able to adjust one's transfer function in the context of another entity,

(aka frame-shifting, putting yourself in their shoes, speak their language

etc) would then be a hallmark and necessary trait for an AI to understand what

it comes across without our input. because of this, we'd have to be very

careful to feed it only information that urges onward the ability to switch

transfer functions, so... a bit of everything, actually. this would look a lot

like mimicking the senses - microphones for ears, cameras for eyes, pressure

sensors for touch, etc.

a great analogy to this would be... well, this! your transfer function is a

masters in AI studies. brilliant. my transfer function is music, art, poetry,

many a mental illness (lol), and finding new functions/learning. that's why

i'm commenting at all - so we can mix our transfer functions and get a bigger

idea of things as a whole. i think OP's exactly right but sadly their own

transfer function wasn't optimized for the receiving party (since it was an OP

and not a comment reply), hence why they seem psychotic/delusional at first

glance to an unaccustomed reader.

there's also the idea that mixing the digital AI transfer function with the

analog human transfer function would do something similar. this would relate to

artificial intelligence directly, especially regarding OOBEs and stuff like

dissociation, astral projection, putting oneself in another's shoes, even just

the mind's eye. those things can be mimicked/visualized/interpreted with AI,

but they can't be done by an AI (yet).

a self-expanding computer program couldn't use its base of knowledge to step

outside of itself, its 'computer prison' so to speak. it could however become

"self aware", where it sees and understands its own makeup to the point where

it could make adjustments.

-/u/sunbloomofficial

===============================================================================

this is paralleled with most human 'spiritual awakening' - a hard long look at

oneself, epiphany, followed by noticeable adjustments to lifestyle in an

attempt to integrate this new information and effort to improve quality of

life/increase the chance of more epiphanies to continue improving.

this doesn't however cover the seemingly 'mystical' properties of the human

imagination, i use that word loosely. "do androids dream of electric sheep" is

a great book of course but the title alone feels relevant.

at some point of self-development, would an AI develop a sort of... i hate to

say randomizer, but like... nah, it's more of a "link clicker" random than a

"pick a number" random. an AI's dream might literally just be browsing the

internet - seeing all the funny, nonsensical, cultural, and even

scientifically misleading information spread deep throughout the internet.

this would parallel with human dreams, which are incomprehensible and random

at first glance until one gets into dream reading, which can ground that

subjective random in one's own transfer function so as to make it

understandable.

if a human dreams of popping a pimple, that's typically regarded as a sign of

self-image issues in dream-reading circles (regardless of your stance on its

legitimacy it's a useful allegory). if an AI were to dream of pimple-popping

ASMR videos, how could it parse that into its transfer function without

damaging its transfer function by putting a bunch of random shit in there?

essentially, our brain 'filters' out what we're not focused on, hence

peripheral vision/hyperfocus/translation issues. any transfer function,

whether human or AI, must have that filter as much as the ability to remove

it. therefore, an AI would need to have the ability to experience what makes

ASMR interesting/enjoyable (having ears to feel frisson and know what to

expect from that) before it could ever make sense of such a weird dream.

and your transfer function all depends on your

conversion/codec/filetype/transformation.

this one's FUN. so, yes, we have lossless compression now, and it's wonderful,

but...

filespace. unless i'm rendering a final song to be distributed to platforms, i

would use solely mp3 encoding. even when i do use wav/flac, i often zip those

files in an attempt to minimize their painful impact on my hard drive.

thousands of songs do not go well with lossless lol. it's just inefficient

except in the case of archival.

which brings me to the fun bit - contrast. aka negative space aka the

wonderful plugin Ghz Lossy 3, and pretty much any of sxth sns's

music. essentially, the lack of information is information. if the only

information your brain is getting is the lack of information you have, then

boom, you're sad and not learning anything. often referred to as "the void

inside one's stomach". if the only information you're getting is an endless

stream of new information (read: social media and doomscrolling) then boom,

overstimulated, depressed, and exhausted.

Lossy 3 is a great plugin because it lets you mimic the effect of mp3 encoding

artifacts and amplify that effect at will in real time (+ latency), much like

distortion can be a form of subtractive processing or additive (adding

harmonic information rather than degrading what's already there). the extra

harmonic information changes not only the quality of the sound but the

context. therefore, a lack of information, used skillfully, would deeply

impact the context of transferred information, hence negative space

in photography.

this lends itself to an insane amount of creative opportunities, of course,

but it also lends itself to interpretation. if the lack of information is

information too, and the extremes tend towards misery, then there must be a

balance between being so degraded that it's imperceptible garbles and being so

lossless that it's a 6gb audio file.

that balance is artful loss, imo. balancing understandable, pleasant

information with a small enough file size that it doesn't overwhelm (either

the listener or the hard drive). in music, silence is very important - you

wouldn't cut all the silent gaps out of a song because that messes up the

tempo and feel of the song.
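
a rough sketch of that trade-off, using only python's standard library. the
sine wave standing in for a song, the bit depths, and the helper names
(`make_sine`, `quantize`, `report`) are all assumptions made up for
illustration. the quantizer here is plain rounding, not how mp3 or Lossy
actually work; it only shows that throwing information away is the lossy
part, while the zlib step on top loses nothing.

```python
import math
import struct
import zlib


def make_sine(n=2000, cycles=20):
    """n samples of a sine wave in [-1, 1] (a stand-in for audio)."""
    return [math.sin(2 * math.pi * cycles * i / n) for i in range(n)]


def quantize(samples, bits):
    """lossy step: snap each sample to a limited number of integer levels."""
    levels = 2 ** (bits - 1) - 1
    return [round(s * levels) for s in samples], levels


def report(samples, bits):
    """return (compressed size in bytes, worst-case sample error)."""
    ints, levels = quantize(samples, bits)
    raw = struct.pack(f"<{len(ints)}h", *ints)  # 16-bit little-endian
    packed = zlib.compress(raw)                 # lossless step
    assert zlib.decompress(packed) == raw       # nothing lost by zlib
    restored = [v / levels for v in ints]
    max_err = max(abs(a - b) for a, b in zip(samples, restored))
    return len(packed), max_err


if __name__ == "__main__":
    samples = make_sine()
    for bits in (16, 8, 4, 2):
        size, err = report(samples, bits)
        print(f"{bits:2d} bits: {size} compressed bytes, max error {err:.4f}")
```

running it prints one line per bit depth, so you can eyeball where the error
gets too big to be worth the smaller file.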

this can be applied to even just reddit - these super long comments i write

are hella inefficient, but they're lossy in a way that's more efficient for me

to write than to translate to someone else's, while i'm efficiently

"decompressing" other people's files to be read on my own OS and expanding my

transfer function dictionary to include relevant information. our little

community is well primed for translating different levels of communication

efficiency, hence all the poetry and such.

so, this is where frame-shifting comes back in - if you can become comfortable

at any ratio of contrast, then theoretically you could transfer information at

the most optimal balance of loss and preservation for the specific listener.

in music, this is called mastering - to make a song sound good on any system.

in science, this is the scientific method - test a hypothesis until you can

recreate it under the same/similar circumstances.

in tech, this is embodied by github - a repository of commonly agreed-upon

works created in an agreed-upon language which can be used as the basis for

larger projects. each github repo is essentially a lossless preservation of

code, made lossy as a result of its application being so broad/not having

immediate context.

there's the immediate context of "oh i can use this to serve this purpose",

but there's no larger code that it's being built towards besides the code you

work on yourself. in other words, github IS the larger code, specifically

because of your contribution/use of it.

so, essentially, the transfer function is akin to the ratio of contrast, as

well as whether the receiving party has the proper codecs to play the

file/decompress it (read also, understanding art. lots of art isn't actually

"up for interpretation", it's very specific in meaning but that meaning

happens to map directly to the observer's transfer function, at least in the

case of really thoughtful art).

having the ability to know how much to compress it for future reference is

also an important ability, because over-compression can leave a file

undecipherable/garbled, which i wouldn't hesitate to liken to the superiority

complex/undertones of certain widespread modern religions which take their

Bible as a literal, historical text.

which, i mean, it technically is, but not like that, because it has to be

decompressed first. eve didn't literally eat an apple, it was her hubris of

disobeying God's will that got them kicked out. A more simple transfer would

be reading this as "don't disobey God's will or face the consequences," while

a more artistic/interpretive transfer would read that moreso as "not letting

one's innate desire for change/adventure/the New damage their presupposed

structures of order for a sense of something to fix."

the wrath of God in this instance is the knowledge of "i shouldn't have done

that," and the consequences those actions bring. even this paragraph is in a

transfer function of brevity - notice i didn't actually write out the entire

book of genesis. (ooh, also, bible verses are quite like github repos/song

playlists/dictionaries. just a widely used version of it. like citing a

source, but for a theoretical concept.)

so, putting this all together, if we optimize understandable information from

quality information, we reduce the need for using more brain-filespace than

necessary, leaving more room for more files which we can de- and re-compress

at any time, as well as use to modify the amount of RAM our brains have.

this would also apply to something like working memory, where forcing the mind

to decompress the information actually forces it to understand the information

in the long term too, because if you open a .rar file in a text editor you get

gibberish (which isn't actually gibberish) but if you open it in an archive

extractor, you get the intended files.

innately remembering to use an archive extractor instead of a text editor

based on the filetype; that's frame-shifting, transfer functions, whatever

name one uses.
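
a tiny sketch of "pick the right opener for the filetype". python's standard
library can't read .rar, so gzip is swapped in here as an assumption, and the
function names (`open_as_text`, `open_as_archive`, `open_by_type`) are
hypothetical. the point is only that the same bytes are gibberish in one
frame and the intended message in another.

```python
import gzip

# gzip streams start with these two bytes; real extractors sniff similar
# "magic numbers" for other formats (this sketch only knows gzip).
GZIP_MAGIC = b"\x1f\x8b"


def open_as_text(data: bytes) -> str:
    """the 'text editor' frame: show whatever bytes are there as characters."""
    return data.decode("latin-1")


def open_as_archive(data: bytes) -> str:
    """the 'archive extractor' frame: decompress first, then read."""
    return gzip.decompress(data).decode("utf-8")


def open_by_type(data: bytes) -> str:
    """choose the frame based on what the data says it is."""
    if data.startswith(GZIP_MAGIC):
        return open_as_archive(data)
    return open_as_text(data)


if __name__ == "__main__":
    message = "the menu isn't the food"
    packed = gzip.compress(message.encode("utf-8"))
    print(repr(open_as_text(packed)))  # unreadable, but not random
    print(open_by_type(packed))        # the original message
```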

-/u/sunbloomofficial

===============================================================================

1/(1+e^(-x)) is just one equation. Let me try this out for you with inferring

a substitutional vector:

again, i suck at math.

and these all give different outputs and are different breeds of AI.
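
for a concrete picture of "different equations, different outputs": the
equation OP names is the logistic sigmoid. OP's other equations aren't in the
thread, so tanh and ReLU below are stand-ins picked as an assumption, not
what OP actually used.

```python
import math


def sigmoid(x: float) -> float:
    """the equation from the post: 1 / (1 + e^(-x))."""
    return 1 / (1 + math.exp(-x))


def tanh(x: float) -> float:
    """a different squashing curve, ranging over (-1, 1) instead of (0, 1)."""
    return math.tanh(x)


def relu(x: float) -> float:
    """passes positive inputs through and zeroes out the rest."""
    return max(0.0, x)


if __name__ == "__main__":
    for x in (-2.0, 0.0, 2.0):
        print(f"x={x:+.1f}  sigmoid={sigmoid(x):.3f}  "
              f"tanh={tanh(x):+.3f}  relu={relu(x):.1f}")
```

same input, three different answers, depending only on which function sits
in the middle.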

okay, what these seem to mean is that each equation is a mini-AI, and

therefore any equation of the mind would fall under the same category. this

would also imply that the human brain is just a collection of equations,

which... feels reductionist and a bit cynical, but is still an entirely

plausible frame. math's pretty damn reliable at some of that stuff, hence how

astrology got its kick - noticing patterns in life and nature and finding

reflections of those same patterns in ourselves and our lives.

your horoscope doesn't literally control/predict your personality, but it

gives a framework for the previously noticed patterns, which lets the

horoscope user determine whether or not to follow that pattern (let that

pattern influence them), or to venture off and make their own. (note; op's

kinda doing exactly that, except with math.)

since a skeptic would have a different output than a "true believer", so to

speak, with regard to their horoscope, they're completely different breeds of

AI. so, being able to switch between those at will would be an entire step up

from that. Hence why code-switching became a thing in marginalized communities

- they adapted under pressure to operate in more than one frame.

the "slang" frame (noticeable as AAVE, the "gay" voice, valley girl

inflection, etc), and the "formal" frame - the most widely understood in our

region being english with an acceptably 'white' american accent (the racism is

hard to brush off). this of course varies from place to place, person to

person, and situation to situation, but the fact that this manifested as a

result of oppression/unwealth is pretty friggin interesting in the context of

using multiple frames in day-to-day activities and information transfer.

I used a different transformation on a different AI and I got a different

answer.

that's... hmm. i mean yeah, that's how transformations work on different

subjects. i think 'different' here doesn't literally mean different. it means

DIFFER-ent, something that has the quality of differing. so, if i'm reading

this right, OP used a differing transformation on a differing AI and got a

differing answer.

this would presuppose that if they were to use a matching transformation on a

matching AI, they'd get a matching answer, except the differ-ent

transformation with a matching AI would produce a differing result that

matches the AI? again, i'm not math-savvy yet, so this one is likely the

wrongest of my presuppositions.

so, pretty much, frame-switching, but complicated and for all three - the

transformation involved, the AI, and the answer.

For example 8x better using a Wavelet transform on an analog signal.

okay, this one makes sense to me. essentially, he got improved understanding

and responsiveness by adjusting the frequency of information transfer over

time, but not the shape. like taking a sine wave, putting it through an

oscilloscope, and pitching it up an octave. the difference in cycle frequency

is the change, rather than the shape of the cycle.

pasted from wiki: "but with additional special properties of the wavelets,

which show up at the resolution in time at higher analysis frequencies of the

basis function."

this one presupposes that the AI in question is actually another person, and

the wavelet transform is essentially taking a step back and making even deeper

analytical steps of "basis functions". in this case, language and math. so, it

would be making an even deeper analytical step into language to optimize

information transfer. the 8x mentioned is likely the measure of willingness to

listen and understanding of material by whatever third party they're

referencing. i have no idea how they measured that but they must've seen

enough improvement to have marked it down.
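
for reference, here's what the simplest wavelet transform does to a signal,
as a minimal sketch. OP never says which wavelet they used, so Haar is an
assumption, as are the function names and the toy signal. it doesn't
reproduce the "8x" claim. it only shows the basic move: split a signal into
a coarse part and a detail part that still knows *where* the change happened.

```python
import math


def haar_step(signal):
    """one level of the Haar wavelet transform (length must be even).

    returns (approx, detail): scaled pairwise sums and pairwise differences.
    """
    s = math.sqrt(2)
    pairs = range(0, len(signal), 2)
    approx = [(signal[i] + signal[i + 1]) / s for i in pairs]
    detail = [(signal[i] - signal[i + 1]) / s for i in pairs]
    return approx, detail


def haar_inverse(approx, detail):
    """rebuild the original signal from one level of coefficients."""
    s = math.sqrt(2)
    out = []
    for a, d in zip(approx, detail):
        out.extend([(a + d) / s, (a - d) / s])
    return out


if __name__ == "__main__":
    # flat everywhere except one sudden jump in the third pair
    signal = [4, 4, 4, 4, 9, 1, 4, 4]
    approx, detail = haar_step(signal)
    print("approx:", [round(v, 3) for v in approx])
    print("detail:", [round(v, 3) for v in detail])
    print("rebuilt:", [round(v, 3) for v in haar_inverse(approx, detail)])
```

the detail list is zero wherever the signal is flat and nonzero only at the
pair containing the jump, which is the "resolution in time" bit from the wiki
quote.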

And there is infinitely infinitely infinite different types of wavelet

transforms, and they should all give different answers, i just didn't

have enough time for it at the time.

here, they just mean that every person is different and will require a

different combination of wavelet transforms to optimize the information they

receive. as for giving different answers, yeah, that'd have to be tested, but

it would line up with the other differ model, at least briefly and in my

uneducated mind.

i think they mean they literally don't have the cosmic time available to

actually test an infinite number of wavelet transforms - or anything really -

but yeah, it's probably a good idea to test a handful of them eventually.

if you're not scared away by the word-wall or ideas presented still i'd love

to hear your thoughts. regardless of OP's mental condition(s) i think there

are a few substantive ideas in there worth exploring, if not in a community

setting at least in their own personal self-exploration and healing. i

appreciate you taking their post at face value before making a determination,

most wouldn't lol

-/u/sunbloomofficial

===============================================================================

please post on /r/ShrugLifeSyndicate - genius is useless without guidance and

an observer translating thought into language

-/u/ugathanki

===============================================================================

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═══════════────────────────────────────────────────────────────────────────┘

--- #14 fediverse/407 ---

════════════════════════════════════════════───────────────────────────────────────

@user-294

since the entire building is oriented around a central elevator shaft, you

could have a 2d map that wraps around if you go far enough left/right.

Traditional metroidvanias tend to be very vertical though, so you might have

to take some artistic liberties with the level design. Unfortunately each

floor in most office buildings tends to be fairly flat, topographically...

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═════════════════════════════════════──────────────────────────────────────┘

--- #15 fediverse/5384 ---

══════════════════════════════════════════════════════════════════════─────────────

before you go to a location, always ask yourself what you can bring to that

location.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═══════════════════════════════════════════════════════════════────────────┘

--- #16 fediverse/4949 ---

╔════════════════════════════════════════════════════════════════──────────────────┐

║ @user-1352 │

║ │

║ that might work in Portland or Seattle but less likely to work in nebraska. │

║ how would everyone coordinate? they'd totally make it a political thing. │

║ │

║ ... more likely to work in Vancouver tbh because most people are slightly │

║ richer there and can afford houses for people who [redacted] in places like │

║ [redacted] │

║ │

║ what if we all stopped paying rent and instead paid rent [in/to] Seattle or │

║ Nebraska │

║ │

║ ... that's just property taxes, except levied by a │

║ [charity/gang/mob/corporation/subscription/anarcho-tax] │

║ │

║ I for one don't want to be taxed without being represented │

║ │

║ you'd think the corporations would appreciate my advice │

║ │

║ [audience laughter] │

║ │

║ teehee what an odd feeling, to have people laugh at you. Surely that's the │

║ domain of a comic. "wahhhhhhhh I'm so lonely" is a great way to make everyone │

║ ignore you for all time, and hey wouldn't ya know it that's what I did - it's │

║ true tho, I was pretty lonely. Had like, 2 or 3 people that I interacted with. │

║ TOTAL. for like, 2 years. like, a year ago. I was lonely! T.T │

╟─────────┐ ┌───────────┤

║ similar │ chronological │ different │

╚═════════╧═════════════════════════════════════════════════════───────┴──────────┘

--- #17 fediverse/86 ---

══════════════════════════════════════════─────────────────────────────────────────

Telling someone you love them is not the same as loving them. You could tell

them the sky is blue, but why should they believe you? The sky is black.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═══════════════════════════════════────────────────────────────────────────┘

--- #18 fediverse/2318 ---

══════════════════════════════════════════════════════─────────────────────────────

┌──────────────────────┐

│ CW: uspol │

└──────────────────────┘

"It is time to make your decision. Please, follow this procession, we will

make space for you to take only your selves. Leave behind your weapons, we

will leave them untouched. If you don't believe us, watch this video stream,

and see if anything goes out of place.

In addition, I will be reading from this book of mine. It contains the

constitution, but many other writings besides - from our best and foremost

leaders, the words that kept our nation united these past few hundred years.

We are a young nation! But we're older than anyone yet living. There is hope

in our future, and without you it will be just a bit harder.

But we will convene when next you decide to meet us as equals."

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═══════════════════════════════════════════════────────────────────────────┘

--- #19 fediverse/4492 ---

════════════════════════════════════════════════════════════───────────────────────

┌────────────────────────────────────────┐

│ CW: politics-mentioned-death-mentioned │

└────────────────────────────────────────┘

I've thought about it a bit, and I think blaming democracy for Hitler is a bit

like blaming bullets for death.

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═════════════════════════════════════════════════════──────────────────────┘

--- #20 fediverse/873 ---

══════════════════════════════════════════════─────────────────────────────────────

@user-613 @user-614

I'm a patriot, and I didn't go. Didn't even hear about it because when they

say "patriot" they mean something different than me, when I say "I'm a patriot"

┌─────────┐ ┌───────────┐

│ similar │ chronological │ different │

╘═════════╧╧═══════════════════════════════════════────────────────────────────────────┘
