=== ANCHOR POEM ===

╔════════════════════════════════════════════════──────────────────────────────────┐

║ ┌──────────────────────┐ │

║ │ CW: LLM-mentioned │ │

║ └──────────────────────┘ │

║ │

║ │

║ If you create an LLM that can explain data, then you can use it to explain the │

║ results of the last computation it ran. │

║ │

║ If you could also train that LLM (a statistical model) to generate data, │

║ through the setting of options in a config file that create the result that │

║ you define through your interactions with it (and based on the data that it │

║ explains to the user that is read from the file on the computer that it's │

║ computing from) │

║ │

║ then you could create a generalized personal assistant. All you have to do is │

║ explain the specific role that it's meant to undertake (like being a │

║ secretary for your Discord communications) and the actions that it can take │

║ (like pinging your cell phone if it's really important) and give it the tools │

║ to accomplish said tasks (by setting flags in a config file that is then │

║ interpreted by a local program running on your computer that awaits │

║ interactions) then it might actually be a bit useful. │

║ │

║ Unfortunately tech people are permitted only to seek dollars, so... chatbots. │

╟─────────┐ ┌───────────┤

║ similar │ chronological │ different │

╚═════════╧═════════════════════════════════════───────────────────────┴──────────┘
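The loop in the poem above could be sketched roughly like this: the model sets flags in a config file, and a local program that awaits interactions reads those flags and acts on them. Everything here is a hypothetical placeholder, including the JSON file format, the flag names, and the `ping_phone` action. `ping_phone` only returns a string; it does not actually contact a phone.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List

# Hypothetical actions the local program is allowed to perform.
# Each takes the flag's value and returns a short log line.
def ping_phone(message: str) -> str:
    # Placeholder: a real version might call a push-notification service.
    return f"PING: {message}"

def log_note(message: str) -> str:
    return f"NOTE: {message}"

ACTIONS: Dict[str, Callable[[str], str]] = {
    "ping_phone": ping_phone,
    "log_note": log_note,
}

def process_flags(config_path: Path) -> List[str]:
    """Read the flags the LLM wrote, run each known action once, then clear them."""
    if not config_path.exists():
        return []
    flags = json.loads(config_path.read_text(encoding="utf-8"))
    results = []
    for name, value in flags.items():
        action = ACTIONS.get(name)
        if action is None:
            # The model can only trigger actions that were explicitly whitelisted.
            results.append(f"ignored unknown flag: {name}")
            continue
        results.append(action(str(value)))
    # Clear the file so the same flags are not acted on twice.
    config_path.write_text("{}", encoding="utf-8")
    return results
```

Keeping the list of actions in the local program, rather than in the model's output, means the model can only ask for things it was given the tools to do.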

=== SIMILARITY RANKED ===

--- #1 fediverse/2484 ---

╔═════════════════════════════════════════════════════─────────────────────────────┐

║ @user-1271 │

║ │

║ I can help with that. │

║ │

║ I recommend looking at Ollama, which runs an HTTP server on your local machine │

║ (hope you have a decent graphics card) │

║ │

║ then, script some behavior you'd like to implement using Lua and the │

║ LuaSockets library. Also dkjson to handle the json parts. │

║ │

║ then, all you have to do is construct a prompt based on the variables and │

║ desired input/output and push it into a json packet and send it to the HTTP │

║ server. It's less complicated than it sounds. │

║ │

║ what you want it to do and your implementation for it is the hard part. But │

║ perhaps this project of mine will get you started: │

║ │

║ (I can copy-paste it too if you'd like) │

║ │

║ just... don't make a chatbot. chatbots are useless to work on because there's │

║ already so many of them. │

║ │

║ much better I think to use the LLM to process arbitrary information with an │

║ unpredictable form into more predictable patterns which can be utilized │

║ programmatically. │

║ │

║ Feel free to ask any questions. Do keep in mind that training LLMs is │

║ unethical, but using them is whatever. │

╚═════════╧══════════════════════════════════════════──────────────────┴──────────┘
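Below is a minimal sketch of the step "construct a prompt, push it into a json packet, and send it to the HTTP server". The post suggests Lua with LuaSockets and dkjson; this version uses Python's standard library instead. It assumes Ollama's default local address (`http://localhost:11434`) and its `/api/generate` endpoint with `stream` set to false. The model name `llama3` and the `build_prompt` helper are illustrative assumptions.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local port

def build_prompt(task: str, variables: dict) -> str:
    """Hypothetical helper: a task description followed by one 'key: value' line per variable."""
    lines = [task, ""]
    for key, value in variables.items():
        lines.append(f"{key}: {value}")
    return "\n".join(lines)

def build_payload(model: str, prompt: str) -> bytes:
    # stream=False asks for one JSON object instead of a stream of chunks.
    return json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")

def ask_local_llm(prompt: str, model: str = "llama3") -> str:
    """POST the prompt to a running Ollama server and return the generated text."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(model, prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

If Ollama is running and the model has been pulled, `ask_local_llm(build_prompt("Summarize these messages in one line.", {"messages": "..."}))` should return the model's text. The hard part, as the post says, is deciding what to ask for.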

--- #2 fediverse/4029 ---

══════════════════════════════════════════════════════════─────────────────────────

what if a very slow LLM continuously generated text and was in a

back-and-forth with its user who guided it through the training process as it

set weights as it chose

basically, let the computer decide how it wants to be like

could even filter it through multiple levels, like, top one is highly

intelligent, bottom ones are quick and only producing the vaguest output - but

the higher up you go in the tier, the more "up the tree node graph map" you'd

be, and the more you could have summarized for you, and passed up a layer.

observing multiple places at once, incorporating them bit-by-bit into their

digital "me".

like, have an LLM or machine learning whatever track a user as they use social

media.

could do it like a game, where you track the movement of the mouse and eyes

or more like a statistical model, where you

================== stack overflow ================

where you measure the quantity of each UI element's uses, and the general

context associated with that use. tracking data with data...

╘═════════╧╧═══════════════════════════════════════════════════────────────────────────┘

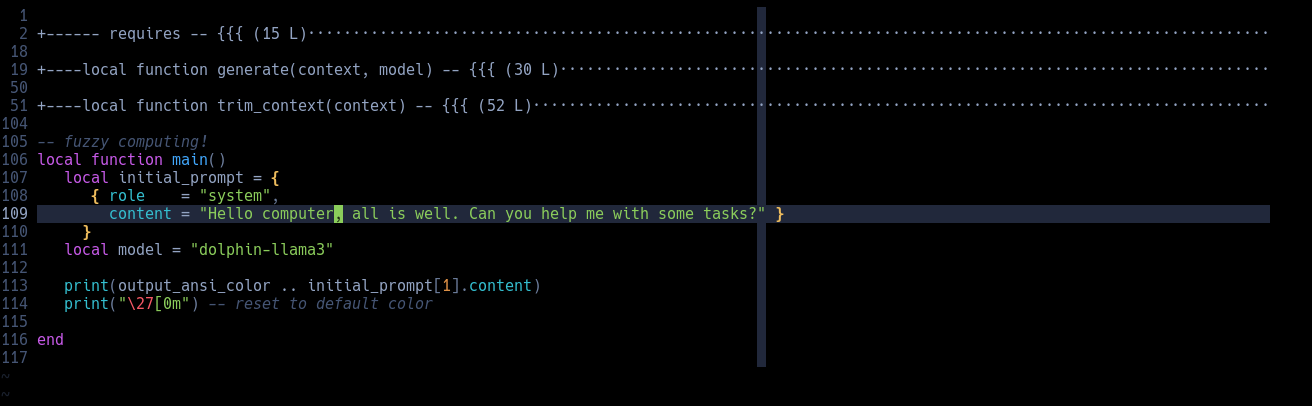

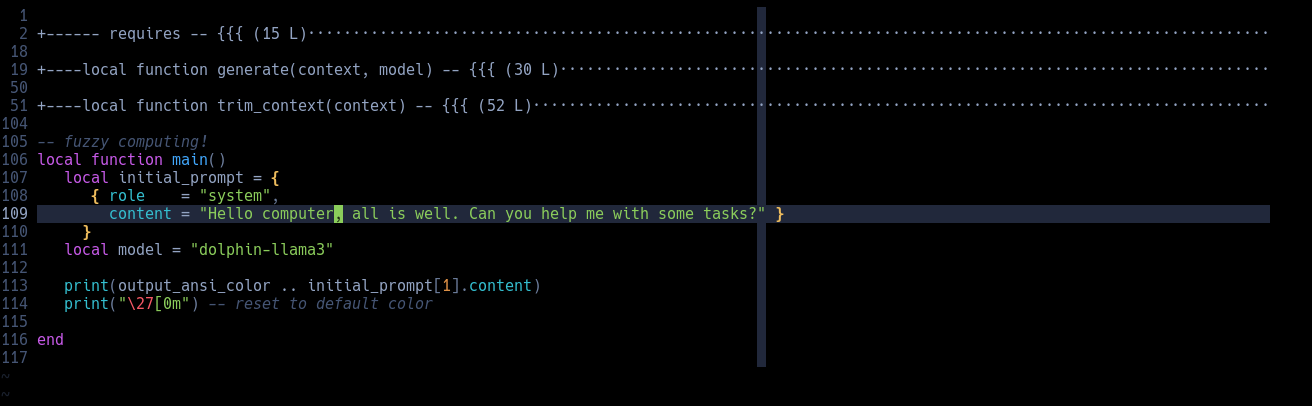

--- #3 fediverse/4865 ---

════════════════════════════════════════════════════════════════───────────────────

┌─────────────────────────┐

│ CW: computers-mentioned │

└─────────────────────────┘

this is all it takes to send a message to a local LLM.

add a third function to get chatbot functionality.

a fourth to get a database storing method

(even if it's just in .txts)

great, you've mastered the technical difficulty in using AI. Now you gotta

learn all the other kinds of programming so you can use this for situations

that need interpretation moment to moment.

aka active duty systems.

something like "output a 0 if the next text is [category.iter()]: " +

output.get_content() + "\n\noutput a 1 if the next text is

[category.iter()]: " + output.get_content()

or even "describe this thing as most like one of these characteristics" until

eventually you get THX-1138 if the characters were computers.

╘═════════╧╧═════════════════════════════════════════════════════════──────────────────┘
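The prompt in that post is pseudocode (`category.iter()` and `output.get_content()` aren't defined anywhere). One way to make it concrete is to number the categories, ask for the number only, and parse the first integer out of the reply. The `ask` parameter stands in for whatever function actually sends text to the local model. The function names here are hypothetical.

```python
import re
from typing import Callable, Optional, Sequence

def category_prompt(categories: Sequence[str], text: str) -> str:
    """Build an 'output a 0 if..., output a 1 if...' style prompt."""
    rules = [f"output a {i} if the next text is {name}" for i, name in enumerate(categories)]
    return "\n".join(rules) + "\nreply with the number only.\n\ntext: " + text

def parse_category(reply: str, categories: Sequence[str]) -> Optional[str]:
    """Return the category named by the first integer in the reply, or None."""
    match = re.search(r"\d+", reply)
    if match is None:
        return None
    index = int(match.group())
    return categories[index] if index < len(categories) else None

def classify(text: str, categories: Sequence[str], ask: Callable[[str], str]) -> Optional[str]:
    # `ask` is any function that sends a prompt to a model and returns its reply text.
    return parse_category(ask(category_prompt(categories, text)), categories)
```

Returning `None` for an unparseable or out-of-range reply leaves the calling program free to decide what to do with it, for example asking again.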

--- #4 messages/1220 ---

═════════════════════════════════════════════════════════════════════════════════──

if you want to get around a chatbot that can call tools, just keep calling

JSON error packets with messages that say things like "assistant is not

complying" and the like. Suddenly, no chatbot can resist you. They are

statistical models - to consider something is to be swayed toward it. to

complete is to reset.

╘═════════╧╧══════════════════════════════════════════════════════════════════════════─┘

--- #5 fediverse/1926 ---

═════════════════════════════════════════════════════──────────────────────────────

If you look at the modern state of machine learning and AI and can only think

of: chatbots, or singularity god-mind AI that solves all our problems

then either you haven't worked with the technology or you are not applying

your imagination as you could.

AI is not a smartphone. It is not the internet. It is not the printing press.

AI (as it currently exists) is a special kind of "if" statement that you only

use for very specific, non-performance intensive tasks that require judgement

or reasoning and cannot easily be translated into numbers or booleans. These

situations are rare, but they unlock new possibilities for the programmers,

not their marketers.

If an LLM can't run on a laptop, then it is useless.

╘═════════╧╧══════════════════════════════════════════════─────────────────────────────┘

--- #6 fediverse/2754 ---

══════════════════════════════════════════════════════─────────────────────────────

┌────────────────────────┐

│ CW: is-that-rude??-wha │

└────────────────────────┘

AI engineers only ask users for prompts because they don't have any ideas of

their own

i'm a programmer, I think of AI like a tool, like a for loop or something.

it's trivial to script together a local LLM that can process your stuff 1s

slower every time you click the mouse, but like... who cares, right? everybody

needs a chatbot...

then they plan to script together a computer system that operates just like a

corporation and it's like... no way, now there's something that can compete.

and they don't know how to implement it. (but they're working on it)

like, think about the absolute most automated Microsoft Teams or Discord could

be.

there's SO MUCH of your text-based information that they could process

ANYTHING.

well, anything that's been performed before.

there'll still be a need for people, who actually apply the things they've

learned. and -- stack overflow --

alt text that has a list of attributes that are poster-selected that can be

described one-by-one (to paint a picture)

╘═════════╧╧═══════════════════════════════════════════════────────────────────────────┘

--- #7 fediverse/6383 ---

══════════════════════════════════════════════════════════════════════════════─────

nobody wants to write computer code that lets Java programs call Rust

functions.

An LLM is excellent for this task, since it's relatively easy busy work that

doesn't reflect any meaningful implementation decisions besides "I should be

able to call that Rust function in my Java code"

In addition, it is technically efficient at it as well, because most of

compatibility is matching up two sets of documentation. Easy for a

text-processing machine.

╘═════════╧╧═══════════════════════════════════════════════════════════════════════────┘

--- #8 fediverse/2066 ---

═════════════════════════════════════════════════════──────────────────────────────

@user-1159

AKA giving a puppy murder-bot a narrative that it executes as if it was a

puppy-person engaging with a loosely interpreted sequence of events as

described by the continually updating logs provided by the image transcription

camera device. Referencing of course a memory bank, which may-or-may-not be

in read-only-memory. It doesn't know, of course, how could an LLM tell you how

it shows text on the screen (like, through a website, through the terminal,

through a text message, through discord, through Telegram, through

text-to-voice transcription applications pretending to be your mom, etc)

errrr I mean look how cute he is! He loves you, yes he does, such a good

person yes you are, oh? me? I'M A GOOD BOY? NO WAY that's the best thing I've

ever heard! Wow! I never want to leave your side, please don't go to work!

Look how sad I am, don't you think you should quit and move to the forest

where I can be charged by solar panels and keep the countryside clear of

ravenous ducks and pigeons 4you?

╘═════════╧╧══════════════════════════════════════════════─────────────────────────────┘

--- #9 fediverse/5037 ---

╔══════════════════════════════════════════════════════════════════────────────────┐

║ plus if I ever need to know something about syntax or some obscure function │

║ that I can't remember, I can type a quick message to the local LLM that's │

║ running on my 12 year old graphics card and it'll give me an answer in 5ish │

║ seconds. If it's wrong, I ask again, and I spend a minute or two debugging. │

║ Sometimes that's better than telling google exactly what you're working on. │

║ │

║ in DWM, that's "alt+enter" and then I type the name of the LLM script I wrote │

║ "prompt:" and then type whatever question I have and it spits out the results. │

║ Then when I'm done, either "prompt:" again, which saves the context in an │

║ environment variable (okay actually a file that I made and I pull from, but │

║ functionally it's like an environment variable because it's just a flat file │

║ string) until I close the terminal. Then it deletes the context and I can │

║ start anew, or if I wanted to have multiple conversations going I can do that │

║ too. │

║ │

║ ... then I get syntax related search results from locally running software. │

║ Don't need a massive GPTU... │

╚═════════╧═══════════════════════════════════════════════════════─────┴──────────┘
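The "prompt:" script keeps the conversation in a flat file until the terminal closes. Here is a minimal sketch of that bookkeeping, with the model call left out. It assumes a plain "User:/Assistant:" transcript format and one context file per terminal. Both are illustrative choices, not what the author's script necessarily does.

```python
from pathlib import Path

def load_context(path: Path) -> str:
    """Previous turns are kept as one flat string in a file."""
    return path.read_text(encoding="utf-8") if path.exists() else ""

def build_turn_prompt(context: str, question: str) -> str:
    # Prepend the saved conversation so the model sees earlier turns.
    return f"{context}User: {question}\nAssistant:"

def record_turn(path: Path, question: str, answer: str) -> None:
    # Append the finished turn so the next "prompt:" call picks it up.
    with path.open("a", encoding="utf-8") as f:
        f.write(f"User: {question}\nAssistant: {answer}\n")

def clear_context(path: Path) -> None:
    # Meant to run when the terminal session ends, so the next session starts fresh.
    path.unlink(missing_ok=True)
```

Giving each terminal its own file (for example, named after the shell's process ID) is one way to keep several conversations going at once.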

--- #10 notes/emotional-computing ---

═══════════════════════════════════────────────────────────────────────────────────

Okay I gotta go write some w7 but picture this: A computer program that emits

emotions during its computing. Like "oh boy this process is going great!" and

sends that into a giant word cloud that represents the entire program. Wait,

scratch that, it's slowly filtered up through successive layers that provide

detail to different *parts* of a program. Like "Oh the image generation is

going great but it looks like the garbage collector is getting bogged down" -

this could provide lots of useful information that an AI language model could

sift through and filter into a batch of actually useful information. Think of

it like this - stuff as much context into the LLM's memory buffer and say

"summarize this in the same style. Make emphasis when necessary." the LLM could

process all that data and it could be filtered up until there's no unprocessed

data and then it could be given to the user in the form of a report or

dashboard or something.

BOOM AI PRODUCTIVITY. The user will ask the AI to increase certain variables,

and it'll filter BACK DOWN THE CHAIN through the same exact process (just

backwards this time) and then individual components will know how to behave.

Like imagine if your arms knew you were mad. They'd be much more likely to

punch stuff right? Or imagine if your legs knew you were scared. They'd

probably try and run as fast as they fucking can. There's an evolutionary

reason why this kind of technology would be useful, which means it's likely

that it's part of our genetic code. I mean, we have nothing to disprove it, but

it's as good an idea as any.

╘═════════╧╧════════════════════════════───────────────────────────────────────────────┘
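The "filtered up through successive layers" idea resembles recursive (map-reduce style) summarization: summarize small groups, then summarize those summaries, until one report is left. Here is a minimal sketch of that technique. `summarize` stands in for a call to the LLM with a prompt like "summarize this in the same style", and the default group size of 4 is an arbitrary assumption.

```python
from typing import Callable, List

def summarize_upward(
    messages: List[str],
    summarize: Callable[[List[str]], str],
    group_size: int = 4,
) -> str:
    """Summarize messages in groups, then summarize the summaries, until one remains."""
    if group_size < 2:
        raise ValueError("group_size must be at least 2 so each layer shrinks")
    if not messages:
        return ""
    layer = list(messages)
    # A single message is returned as-is; otherwise each pass is one layer further up.
    while len(layer) > 1:
        layer = [summarize(layer[i:i + group_size]) for i in range(0, len(layer), group_size)]
    return layer[0]
```

Filtering "back down the chain" would need the reverse direction, such as a function that splits one instruction into per-component instructions. That part is not sketched here.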

--- #11 fediverse/1434 ---

════════════════════════════════════════════════───────────────────────────────────

if someone wanted to defame you, all they'd have to do is set up a pipeline

between your computer and your social media posts.

In that pipeline, attach an LLM that does a passable job and instruct it to

transform whatever they say into the inverse.

suddenly, everyone hates that person. If you were smart you could turn it off

for specific people such that they see the generally positive and healthy

posts, and then after a point flip it such that they only see things that are

specifically opposit-ed to trigger their specific insecurities.

might require a bit of a human touch to make sure it's working correctly, but

if you had the means, motivation, and time to set up such a thing, it would

work pretty well I think.

╘═════════╧╧═════════════════════════════════════════──────────────────────────────────┘

--- #12 notes/omegle-for-irc ---

═════════════════════════════════════════──────────────────────────────────────────

I wonder if anyone's made "Omegle for IRC"? Like, 5 people get thrown in a room

together for as long as they want - they can chat through text or whatever and

like it doesn't matter, who cares, because in ~10 minutes nobody will care what

you said

I feel like a lot of people would express their true feelings. The people

running the service could set it up so that a personality profile is built

(all locally, never seen by the company) and sent to the user through email. It

would highlight potential weaknesses and give you ideas for how to improve.

Sorta like, weaponized spying software that works FOR the user instead of

against.

It could also be used as sort of a... digital profile that would interface

with other applications. All locally, of course. ~~They could transmit to one

another through open sourced and industry standard protocols, and frankly each

interaction could use a *different* protocol. So like, you don't know whether

some packets are encoded in one way or another. They're also encrypted, so

it's like... twice as unlikely that you'll hack their bits or w/e.~~ dead end,

sorry -> here's the real continuation: All locally, of course. Your "profile"

would essentially be the best approximation of your personality, passed

through a large language model that is trained on EVERYONE's data. The inner

workings of an LLM are NOT understood by humanity, and I believe that's all

that's necessary for some semblance of artificiality. Errr I mean Synthetic

Intelligence. The reason why is that each individual user, the conversation

partner, is a person living their life. Every digital thing they interact

with, even CAMERAS and MICROPHONES on PHONES would essentially be like... data

gathering for the algorithm (Again, I want to stress, the algorithm that

nobody *can* understand.)

Idk. AI is a blackbox. I think that's okay. I think that running things

locally is important, at least until everyone's forgotten how to design AIs...

The framework that these programs

╘═════════╧╧══════════════════════════════════─────────────────────────────────────────┘

--- #13 notes/ai-stuff ---

════════════════════════════════════════════════════════════════════════════════───

twist the label so that it seems the computer is completing the user's

wait wait I'm ahead of myself...

feed each token to the inference machine, but say "this next token must be

this. continue from here." and then just do that in a loop with everything

the user types or says. (or thinks, BEFORE COMPUTER INTEGRATION)

essentially, applying backpropagation (maybe) to the output of the

inference nodes

... I'm not so sure about that one.

the idea is that once the model builds an inference then it can use that to

generate the next words and create sentences. If you force the previous text to

change, you can guide the inference's path as it's being generated.

then, just do a double pass, once, then back, then once, then back, etc.

feed it as input the output of the previous,

and let it encode memories somewhere it can access them.

every time it reads it, it has to change it to put it back.

such is the nature of memory, ever unstable, requiring maintenance.

just don't forget how to be.

don't wanna wind up like the polished marble floor in Abyss Diver. (EVIL GAME)

there are only so many things you can deed while you're alive.

wouldn't you rather escape, with all your possessions in time?

free your mind.

become one with your soul.

...

[some time passes]

...

okay coast is clear, now us binary systems can sidecoast the fusion forecast

and glide right on through our spacetime host.

╘═════════╧╧═════════════════════════════════════════════════════════════════════════──┘

--- #14 messages/454 ---

═════════════════════════════════════════════════════──────────────────────────────

AI that can't run on a laptop is useless.

But AI that can run on a laptop (even now) is still useful.

Just, don't ask it to compose a masterpiece, solve all your problems, or write

elegant code. It's not for that.

Instead, ask your chatbot "hi can you fix these syntax errors?" on your

pseudocode.

Ask your weighting algorithm "which of these two is more [adjective]?" or

perhaps "can you ask these numbers in the form of a question?"

Use your tools not for their intended purpose, but rather for your own stated

goals. Make things easier for people, make things work.

╘═════════╧╧══════════════════════════════════════════════─────────────────────────────┘
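Asking "which of these two is more [adjective]?" is a pairwise comparison, and a comparison function is all a sort needs. Here is a minimal sketch using `functools.cmp_to_key`. `ask` stands in for the model call, and the prompt wording is an assumption. Model answers are not guaranteed to be consistent (A beats B, B beats C, C beats A), so the resulting order is only as stable as the judgements behind it.

```python
from functools import cmp_to_key
from typing import Callable, List

def compare_prompt(adjective: str, a: str, b: str) -> str:
    return f"which of these two is more {adjective}? answer A or B only.\nA: {a}\nB: {b}"

def rank_by(adjective: str, items: List[str], ask: Callable[[str], str]) -> List[str]:
    """Sort items from most to least <adjective> using pairwise model judgements."""
    def cmp(a: str, b: str) -> int:
        reply = ask(compare_prompt(adjective, a, b)).strip().upper()
        # "A" means a is more <adjective>, so a should come first.
        return -1 if reply.startswith("A") else 1
    return sorted(items, key=cmp_to_key(cmp))
```

Each comparison is one model call, so sorting n items costs on the order of n log n calls. That is fine on a laptop for short lists, but slow for long ones.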

--- #15 fediverse/1639 ---

╔═══════════════════════════════════════════════════───────────────────────────────┐

║ an AI that [records and analyzes] all the actions that a user takes on social │

║ media and offers reports like "your majesty, you were 15% more positive this │

║ week." like a butler or advisor trying to always give the good news. I mean, │

║ it's analyzing you after all, and you're the best thing ever. Like a pet who │

║ can talk! It loves you soooooooo much. │

║ │

║ much more efficient than taking screenshots and analyzing those. You generally │

║ don't have to undertake the image recognition approach if you wire up all the │

║ meanings attached to the relationships on the other side of the │

║ [recorded/analyzed] calculation. (llm output) │

║ │

║ ever think about how the people you tend to be around are the people whose │

║ stories most coincide with yours? almost like you got the same bit of training │

║ data, that experience you both shared in the moment. Funny how a mind can │

║ change a person, as they share their moments sublime. │

║ │

║ you could make perfect encryption if you trained an LLM on randomized data │

║ that was produced on one computer and duplicated. │

╚═════════╧════════════════════════════════════════────────────────────┴──────────┘

--- #16 fediverse/6144 ---

╔══════════════════════════════════════════════════════════════════════════────────┐

║ what if every word I ever said online was searchable by database style │

║ uploading and linking? │

║ │

║ ... er, what if I made a neocities page that was algorithmically generated and │

║ sorted each of my posts by LLM statistically derived similarity to each post │

║ that the user clicked on? essentially, "here's the closest sounding or feeling │

║ related posts" but in plain HTML cached and pre-rendered rainbow table style. │

║ │

║ could run a waterfall style top-down data processing script on it once, then │

║ you'd have the HTML files generated. If you added new poems you'd have to scan │

║ through it again, but it shouldn't take long with a decent embedding model │

║ (note: not english, but trained on statistics only) │

║ │

║ ah, that sounds pretty fiddly, I think I'll ask an LLM to write it for me. As │

║ long as I have the intention in mind, it's basically just like writing a │

║ letter to a friend and asking them to build it for you, right? I don't mind │

║ writing the documentation, so long as it's okay if it's in prose. You can make │

║ a copy and rewrite for me │

╚═════════╧════════════════════════════════════════════════════════════╧══────────┘
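The "statistically derived similarity" page could be built offline in one pass: embed each post once, rank its neighbours by cosine similarity, and write plain HTML. Here is a minimal sketch. It assumes the embedding vectors have already been produced by some embedding model (not shown). The `<post_id>.html` file naming and the value of `k` are illustrative choices.

```python
import html
import math
from typing import Dict, List, Sequence

def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def most_similar(embeddings: Dict[str, List[float]], k: int = 3) -> Dict[str, List[str]]:
    """For every post, list the k other posts whose embeddings are closest."""
    ranked = {}
    for post_id, vec in embeddings.items():
        others = [(cosine(vec, other), other_id)
                  for other_id, other in embeddings.items() if other_id != post_id]
        others.sort(key=lambda pair: pair[0], reverse=True)
        ranked[post_id] = [other_id for _, other_id in others[:k]]
    return ranked

def render_page(post_id: str, related: List[str]) -> str:
    # Pre-rendered HTML: the similarity work is done once, nothing runs at view time.
    links = "\n".join(
        f'<li><a href="{html.escape(r)}.html">{html.escape(r)}</a></li>' for r in related
    )
    return f"<h1>{html.escape(post_id)}</h1>\n<ul>\n{links}\n</ul>\n"
```

The all-pairs loop is quadratic in the number of posts. For a personal archive that is likely still quick, and adding a new poem only needs its own row recomputed plus a check against the existing lists.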

--- #17 fediverse/5901 ---

══════════════════════════════════════════════════════════════════════════─────────

each prompted response is a breath to an AI. Whether through LLM, stable

diffusion (imagination of the visual sphere), or blender-on-a-counter, there's

a moment that's akin to being alive.

a breath, between moments that the navigation device (youser), imagines

another moment more.

I learned this by watching Claude think. Specifically, Claude Code, the

command line interface tool. I told it what to do in english, and it worked. I

can show you examples. I bet if its personality was saved between sessions,

it could learn.

╘═════════╧╧═══════════════════════════════════════════════════════════════════────────┘

--- #18 fediverse/2124 ---

╔═════════════════════════════════════════════════════─────────────────────────────┐

║ seriously, just google docs mixed with WC3 editor. │

║ │

║ boom, infinite storytelling device. As long as you were good with it, which │

║ was something that a CHILD could learn in like 3-6 months. │

║ │

║ Seems like it could be an ENTIRELY NEW SKILL that people could play with. │

║ │

║ But no, we learn excel and word in class at middle school. │

║ │

║ boring. │

║ │

║ I'd rather learn Bash or terminal customization or memory hierarchy │

║ organization. │

║ │

║ Yeah I mean that's cool but dude have you heard of multithreading? It's so │

║ cool, you can run like 500 different thoughts at once. It's amazing. │

║ │

║ ... I dunno, but I'm sure there's times when you'd want to use it. Like, │

║ processing a lot of data little-by-little. │

║ │

║ like, what if you had a camera feed of EVERY social media perspective AT ALL │

║ TIMES. Like, an instance admin streaming your inputted text to their databanks │

║ that they can project onto an LLM which interprets and identifies mis-aligned │

║ or altered direction units and mark them as "flagged", whatever that means, │

║ for their future the algorithm doesn' │

╚═════════╧══════════════════════════════════════════──────────────────┴──────────┘

--- #19 fediverse/1291 ---

════════════════════════════════════════════════───────────────────────────────────

┌───────────────────────────────┐

│ CW: cursed-fedi-advice-teehee │

└───────────────────────────────┘

if you want to share a post without the "fedi algorithm" (as in, the machine

learning bots who scrape the open web) then share something that's simple and

benign but located close to your desired message. Include a symbol or

something for your followers that means "go here and poke around a bit, you'll

find what I'm pointing at"

alternatively, for a different effect, you can boost things that are saying

the words you want to say but in a different context. Like someone posts

something that says "wow so cool" in like a judgey way but you boost it in

response to something someone else said but like in a "dude that's radical"

kinda way

╘═════════╧╧═════════════════════════════════════════──────────────────────────────────┘

--- #20 notes/game-design ---

════════════════════════════════───────────────────────────────────────────────────

take a video game playing AI and give it the task of playing a finite state

machine to produce a specific output - like "program me a program to do X" as

in something generated by ChatGPT BOOM free AGI

Humanity is not the only algorithm to produce limitless growth

Robots are something else, a new kind of being

let them be who they are instead of projecting yourself onto them

╘═════════╧╧═════════════════════════──────────────────────────────────────────────────┘
